Where is the testing profession heading? What does it take to get the Devs to do testing? How do we measure the success of Modern Testing? Let’s ask Alan Page!

It’s a pleasure meeting you once again (virtually this time), Alan. How have you been and what are you up to?

Like most people, I’ve been spending a lot of time at home over the past fifteen months. My dog, Terra, has been pretty happy with the situation and has kept me company during a lot of long meetings on Zoom. I’ve been at Unity for over four years now, and currently lead teams that deliver Unity’s service infrastructure, developer tools, reliability engineering, documentation, and quality coaching. Quite a variety of roles, but all stuff that leads to customers having a quality experience using any of our app SDKs or services.

Let me ask the most important question before we proceed. Do you like testing? Why if yes and why not if no?

Of course, I like testing. I do it all the time. I’m a discerner, so I evaluate, judge, and question things constantly. While on the surface, I do a lot of things that aren’t really “traditional” testing anymore (meaning I don’t spend significant time exploratory testing or writing testing tools), I apply my hunger for knowledge in asking the right questions in order to help coach teams towards delivering better quality.

In the second principle of Modern Testing, we tried to capture this aspect of helping – or accelerating a team’s ability to ship more quickly, and with high quality. Sometimes, but not always, the ‘accelerant’ here is the activity (and output) of testing.

Speaking of which (Modern Testing), could you tell our readers more about it? Where did the idea come from? What made you develop it further? Why is it called Modern Testing?

Years ago, my podcast partner Brent Jensen and I were beginning to see some changes in the way software was developed. As teams moved to Agile, we were seeing teams improve quality and delivery with fewer test specialists, and we were seeing some of those teams see success without dedicated testers. We saw teams use data and monitoring to accelerate their delivery of quality software.

We also saw teams making mistakes as they moved down this path.

We began our podcast as a way to help testers and teams navigate the changes we were seeing. Eventually, we began to refer to it as “Modern Testing” – even though it’s not really about testing, and it’s not really that modern. We just called it that to differentiate it from more “traditional” methods where testing was done late, and often by a separate – and sometimes isolated – team.

Eventually, we came up with a set of Principles to describe what we were talking about. They’re based on what we were seeing as well as some of the books we were reading and that we found helpful (e.g. The Lean Startup by Eric Ries).

The very first time I spoke about the Modern Testing principles in public, I was afraid people would get scared, or I thought at the very least they would disagree. I was surprised that a bit of the opposite happened when several people approached me and told me I had just given a name to the way their team worked. Since then, dozens, if not hundreds, of people have told me the same thing.

You have been a musician too and have been working in the engineering field. What is easier? Composing music that people would love or building software that people would use happily?

When I studied composition, I studied the history of a lot of composers, including a lot of the more avant-garde 20th-century composers. John Cage wrote a lot of great music that I like a lot, but he’s probably most famous for his 4’33”, which is 4 minutes and 33 seconds of silence, where the performers are instructed not to play their instruments during the entirety of the work’s three movements. You could argue, “that’s not music!”, but Cage’s intent – like that of a lot of the other avant-garde artists of the time – was that whether it’s a musical score, a painting, or whatever, the art is the audience’s reaction to the medium. If the audience chooses to fidget in their seat, clear their throat, or yell obscenities and leave, they’ve had a reaction, and, in a sense, art has been made.

What does this have to do with the question? Well, I’d say that composing is more difficult. For most of the software I work on these days, the feedback loop from idea to customer feedback is pretty quick. Iterative and adaptive software development gives us a big advantage in making software that people use happily. There are opportunities for a little of that same style of feedback in composing music – I often would write something and then convince an ensemble to play it for me and offer some feedback – but it was usually about orchestration or idiomatic traits of the instruments, e.g. “that part in the middle felt awkward to play”, or “I feel like the trumpet overpowers the flute in the coda”. That’s all great feedback that helped me improve compositions, but ultimately, those musicians aren’t the ones who need to react to my art. It’s much harder to get a room full of music enthusiasts to come to iteration after iteration of a piece of music and give me quantitative feedback on improving it.

They say culture eats strategy for breakfast. Do you think organizations’ business models (or revenue models) eat testing culture for breakfast too?

I’m pretty sure the “they” in this case was Peter Drucker – who meant that the way your org works (the culture) will have far more influence on the outcome than strategy (or tools, for that matter).

I don’t know if the same is true for business models and testing culture. In fact, I could argue that a culture of quality (which includes more than just testing) has a significant impact on business and revenue goals. It’s the people who execute the strategy and processes – and the way those people work – that drive business revenue, not the other way around.

There are a lot of references to the cost of quality, but I believe that a good approach to quality and testing is a business advantage. When the entire team is focused on testing and quality, products ship more quickly and require less re-work – making them cheaper to build in the long run. Furthermore, the tight feedback loops (which come from having the ability to update more often), give you a much better chance of solving your customers’ problems in a way they enjoy.

You have been in the industry for a long time and have been in senior positions too. When it comes to making a trade-off, why is it that it often happens at the cost of quality and then naturally at the cost of software testing?

As I mentioned in my previous answer, I don’t think testing has to be a cost. I agree that it is often a cost though – especially when testing is done mostly at the end of the project or by a team isolated from the team developing the software.

I think teams choose to cut on testing because the way they’ve planned for it is expensive. I think if the whole team is involved in testing as a forethought then it’s difficult (or impossible) to cut costs by sacrificing testing. It’s just when testing is bolted on late, or approached as a separate phase that it gets expensive and easier to cut.

With all the new tech and tools at our disposal, do you think the software testing industry is inclining a lot towards the “engineering” aspect of it and ignoring the “craftsmanship” part? How do you suggest we find the right balance between the two?

Time for my obligatory Robert Pirsig reference. In Zen and the Art of Motorcycle Maintenance, Pirsig says (paraphrased) that care and quality are two sides of the same coin. If you look at the companies who are successful with software right now, most of them care a lot about their customers, and how their software is made. Assuming that “craftsmanship” and “care” are the same thing, there’s definitely room (and necessity) for a balance, but I think what’s needed is much more of a blend than a balance.

Tech and tools definitely play their part. The tools teams use for code analysis and security analysis help teams get code correctness right much more often. On the other end of the spectrum, observability toolsets are making it easier for teams to understand how their software is used in production. Some of the automation tools are making it easier for the whole team to create stable and valuable tests quickly – giving the team time for deeper testing or analysis. The “tech” is making it easier for teams to put care into the software they deliver.

You have been a strong promoter of “whole team testing” especially the “developer testing” idea. Though I have some strong disagreements especially with the myth of mindset part, looking at your accomplishments it seems the idea worked very well for you. What are some critical elements or pre-conditions in your experience that must be present/fulfilled to succeed with developer testing?

I suppose I need to lead by saying that it’s worked well for me with hundreds of developers and at two different companies.

With that out of the way, the most critical element is accountability. I frequently see someone speak out against developer testing by saying that developers don’t want to test, or don’t feel like testing is their job, or don’t feel like they can do testing. On the teams I work with, I don’t give them a choice. I treat every development lead as I would have treated a test lead years ago. I tell them that they are responsible and accountable for the testing and quality of the software they deliver and give them enough information to get started, and an offer of help and advice any time they need it.

I – or a few test experts on my team – offer coaching and consulting to help them improve their testing ideas. Frequently, we’ll ask them to write test plans (which we happily critique), or brainstorm on test ideas for a new feature.

In the beginning, most developers learning testing are pretty similar to any new tester. They have a lot of unconscious incompetence in regards to testing, and don’t think a lot about the testing they need to do. Sometimes they make mistakes, but they learn from those mistakes and get better. As a lot of us did when learning testing, they eventually get to a stage where they realize there’s more to learn about testing than they can ever learn, but by that time, they’ve realized that continuous learning is the key to their success.

In short, tell them that they’re accountable for testing and quality, and then give them enough help to be successful.

For organizations or industries where the idea of good-enough testing can be suicidal, do you think developer testing can still succeed there? What do such industries and organizations need to do differently to achieve that?

I think every developer – regardless of industry – should perform testing. They should create thorough unit tests at a minimum, but typically larger-scale tests as well.

I could argue that the bar for “good enough” testing changes with context – but maybe you’re trying to get my opinion on whether dedicated testing professionals are still needed for software where errors may cost lives or cause massive losses. That answer depends on a lot more context, but I’ll put it this way. Whether you are making an 8-bit mobile game for children, or engine control software for a lunar lander, your goal is to make sure that you are identifying and mitigating risk to your customers’ success. The risk with the game is low, so developer testing is more than sufficient. For the lunar lander, the business should determine whether developer testing is enough. I don’t know those developers, and I don’t work in that business, but my strong hunch is that they’d need a few more sets of eyes with critical thinking, systems thinking and some domain expertise to get to a level of risk the business is comfortable with.

Most of us work on software with a risk factor somewhere between those two examples. As in those examples, our context (developer experience, risk factors, business goals, etc.) dictates how we want to approach software testing and quality.

One of the principles of Modern Testing mentions that under certain contexts, teams may work without any dedicated tester. Are there any contexts where you think testers must be involved in teams?

This is answered partially, at least, in the previous question. But – this is a good place to point out that blindly removing testers from a team because “some company did it and it worked” is a really bad idea. If you don’t have a culture that supports developer testing, or if the developers don’t have the testing knowledge to properly assess and mitigate risk through testing, your team absolutely needs testers.

However – I also believe that with the proper level of training and quality culture, there are few industries or projects where dedicated testers would be required.

Typically in tech organizations, the quality is regarded as product quality and engineering quality. That apparently explains programming teams’ inclination towards engineering quality. More often than not, this also results in the programming team not feeling responsible for product quality as such. How do teams solve this problem if there are no dedicated testers who are often the connecting link between both aspects of quality?

I feel like this is a bit of a strawman. In a vacuum, I agree – developers tend to focus on engineering-facing quality because it’s in their face. Compiler warnings, static analysis, code coverage, velocity and other measurements of developer quality are built into so many of the tools and processes used by teams, it is certainly easy for them to focus there.

The previous question mentions the seventh Modern Testing Principle. It’s often misunderstood, so let’s take a closer look.

We expand testing abilities and know-how across the team; understanding that this may reduce (or eliminate) the need for a dedicated testing specialist.

This principle is documenting what we’ve seen in many software organizations (note: the Modern Testing Principles are documenting what we’re already seeing; not what we want to happen, or think should happen). What we’ve seen is that as development teams embrace more and more aspects of testing, they may get to a point where they don’t need dedicated testing specialists anymore. They don’t get rid of testers for the sake of getting rid of testers – they just find that they can reach the levels of quality they are striving for without a dedicated tester. Most often, the testers who helped them get to this stage remain on the team in other roles. Often, they’ll move into a new role, but occasionally move back into a testing or quality role if or when needed.

Oh, Alan, I would have liked to be wrong about the experiences that made me ask the previous question. More often than not, I met devs, architects, and engineering leads who always shrugged things off, blaming it all on the “product” team. Anyway, what contribution do you expect from testers in the team, especially after the team switches to the whole team testing idea?

I will assume you’re talking about an embedded tester on a team that has (or states it has) “whole-team testing”. In this case, I like for the testers to drive the test strategy and planning, and pair with developers to write test strategies, test plans, or test charters for their components. They should have a heavy hand in defining what “done” looks like. Given that testers are often the best systems thinkers on the team, they can also drive improvements through facilitating retrospectives and making sure that the team takes opportunities to learn from any setbacks they encounter.

Of course, they can (and should) do some testing too and use whatever they find to help refine and improve the team’s test planning in the future.

I could be wrong, but people who are not fans of investing much in testing often argue that “customers don’t care about testing”. Do you think customers care about development either, or the tech stack/tools we use, or the best programming practices we follow, or how much automation we have in place, for that matter? As long as they get a quality product (or anything that solves their problem) at an affordable price in the minimum possible time, I guess they care about nothing mentioned above. Is it really because testing does not produce production code that it becomes the natural victim of cost-saving strategies?

I don’t think it matters whether you’re a fan of investing in testing or not – it’s a fact that customers don’t care about testing. They don’t care what kinds of testing you did, they don’t care which tests passed, and they don’t care about code coverage or static analysis.

They don’t even want software. They want their problems solved easily and intuitively.

In modern agile teams these days, testers report directly to the engineering lead/managers who often know nothing much about testing (since they worked only as programmers and there was hardly any whole team testing happening back then). This often results in testers not getting the required support/guidance or even buy-in for their ideas at times. With your experience of leading engineering teams (with and without testers), what would be your advice to such managers?

I think it’s the responsibility of those testers to help those managers – and their teams – learn more about testing. I don’t remember where I first heard this, but something I say often is that you can change your manager, or you can change your manager. Meaning, of course, that you can help your manager understand and learn more about the testing and quality side of the business – or, you can find a new manager who’s less dense.

I think leadership and influence are essential skills for testers on Agile teams, and influencing the way your team develops and ships software is a core skill set for the modern tester.

How do we measure the success of Modern Testing?

It’s never something we really thought about – but as I’ve stated before, the concept of Modern Testing exists so that we can help testers navigate changes happening across the industry. I guess a measure of success would be for organizations to understand better how testing changes as they move to a world of quicker releases where quality is based on near real-time customer feedback.

What books would you recommend testers to read?

Like a lot of testers, I own a huge stack of Jerry Weinberg’s books. All of those have some value for testers. I’m most partial to The Secrets of Consulting.

I think testers should understand how shipping more often and learning are correlated with software quality, and for that, I highly recommend Accelerate by Nicole Forsgren et al. and The Lean Startup by Eric Ries.

For systems thinking and the theory of constraints (which are important aspects of shipping quality software), I recommend The Goal by Eli Goldratt, or The Phoenix Project by Gene Kim.

Your message to our readers would be?

There’s a lot of change happening in the way software is delivered. As a tester, you could easily find a way to keep doing exactly what you’re doing, or you could choose to adapt and change and grow along with the industry. With Modern Testing, we’re just trying to help people in the latter path navigate the changes more easily.

You can be the butterfly, or you can be the wind.

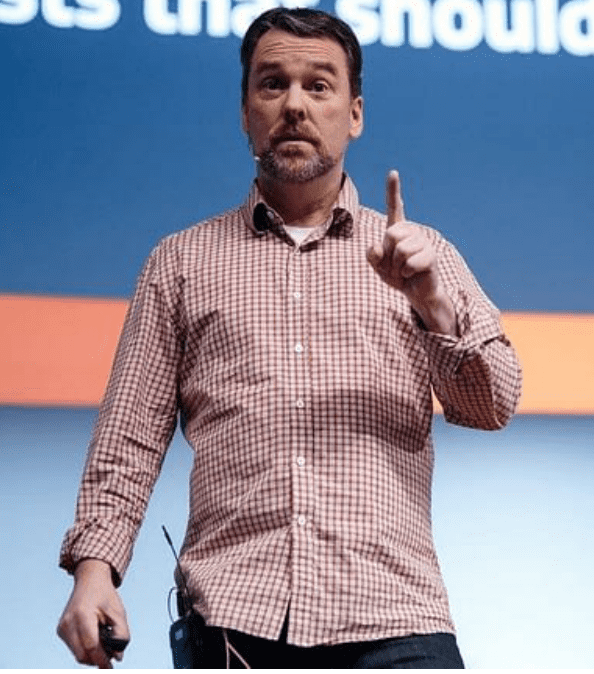

Alan Page

Alan has been improving software quality since 1993 and is currently a Senior Director of Engineering at Unity Technologies. Before joining Unity in 2017, Alan spent 22 years at Microsoft working on projects spanning the company – including a two-year position as Microsoft’s Director of Test Excellence. Alan was the lead author of the book “How We Test Software at Microsoft”, and contributed chapters to “Beautiful Testing” and “Experiences of Test Automation: Case Studies of Software Test Automation”. His latest ebook (which is a few years old, but still relevant) is a collection of essays on test automation called “The A Word: Under the Covers of Test Automation”, and is available on Leanpub.