The American Heritage Dictionary defines estimation as:

A tentative evaluation or rough calculation.

A preliminary calculation of the cost of a project.

A judgment based upon one’s impressions, opinion.

As a software engineer, I have a professional track record that includes the ability to consistently make and keep commitments. To consistently make and keep commitments I have developed the ability to judge the effort required to complete my work.

Over the years I have used many different estimation techniques, including individual and team approaches, analytic models, and even Monte Carlo methods.

The estimation method that sticks the most with me is something I call SEBTE, Simple Experience-Based Test Estimation.

Past Data

When using SEBTE I base my estimate on past data.

I try to make it remarkably simple to collect past data. I invest a few seconds per task to collect the information. I find that team members actively participate in the data collection process when the data can be collected in a seamless, natural way as part of the normal team workflow.

The data I collect is relevant to the team working on the project. If the team changes, or if the organization changes, or if the business changes, or if the technology being used changes, or if the culture changes, or if the lifecycle model changes, then I will need to recalibrate the estimation from scratch.

There are two points at which I collect data: the planning step and the task completion step.

During test planning activities I collect data associated with each task identified. I collect the SIZE and COMPLEXITY of each task.

About SIZE

SIZE relates to the number of factors, variables, or conditions the tester will need to consider when implementing the task. I define size as SMALL, MEDIUM, or LARGE. One way to define the SIZE of a testing task could be:

SMALL: The task will consider fewer than 5 factors

MEDIUM: The task will consider between 5 and 10 factors

LARGE: The task will consider more than 10 factors

Teams must have a simple, clear definition of SIZE. I urge my customers to collect a series of examples of recently completed tasks that illustrate different SIZES. That way, when trying to SIZE a new task, the team can look at similar recent tasks for inspiration. Although the number and range of SIZES are up to you, I try to keep it to three levels. To me, this is just a simple rule of thumb that has worked well over the past 30 years or so.
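The example thresholds above can be written down as a small lookup so that everyone on a team sizes tasks the same way. This is only a sketch in Python: the function name `size_of` and the parameter `factor_count` are illustrative assumptions, and a team would substitute its own thresholds.

```python
def size_of(factor_count: int) -> str:
    """Map the number of factors a task must consider to a SIZE label.

    Thresholds follow the example definition in the text:
    fewer than 5 is SMALL, 5 to 10 is MEDIUM, more than 10 is LARGE.
    """
    if factor_count < 0:
        raise ValueError("factor_count cannot be negative")
    if factor_count < 5:
        return "SMALL"
    if factor_count <= 10:
        return "MEDIUM"
    return "LARGE"
```

For instance, `size_of(7)` falls in the MEDIUM band under these example thresholds.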

About COMPLEXITY

During the planning session, I like to consider the complexity of the testing based on the nature of the changes to the software under test. As with SIZE, I try to define three simple levels of COMPLEXITY named something like SIMPLE, TYPICAL, and COMPLEX.

I might define these complexity levels as follows:

SIMPLE: There has been no change to the program logic

TYPICAL: There has been some change to the program logic or new logic has been added.

COMPLEX: The underlying code is new or has been redesigned or refactored.

It is important that the team have a simple and clear definition of COMPLEXITY. As with size, I urge my customers to collect examples of recently completed tasks that represent examples of SIMPLE, TYPICAL, and COMPLEX tasks.

About TASKS

I have been using the term task quite a bit so far. I call this estimation technique task-oriented because it applies to testing activities which can be divided into a series of tasks.

I consider a testing task to be a chunk of work done by a single tester to learn something about the software being tested. In my experience testing is about learning. I define testing tasks as activities in which the tester is endeavouring to learn something about the software under test.

I identify testing tasks when planning and as I am testing. The task list of a testing project is dynamic and evolves. As I learn more about the system under test, I identify more risks that may be important to explore. Indeed, the best testing ideas I come up with seem to be generated when I am actually testing.

As a rule, I use short phrases to describe testing tasks. The task description is an explicit statement of what I am interested in learning about. I am also incredibly careful never to use the word test as a verb in a description of a testing task. I will never have a task that says “test feature ABC”; instead I may have one or more tasks explicitly describing what I want to learn about feature ABC. “Confirm feature ABC can process foreign and domestic transactions” is an example of a task description I might use. I try to keep task descriptions under 160 characters of text. This is a rule of thumb I derive from my experience over the years using 80-column CRT terminals. I could not always get my testing task descriptions to fit on one line (80 characters), but I could almost always get them to fit on two lines (160 characters). Today testers would probably prefer the metaphor that a testing task description should be tweetable.
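The two rules of thumb above (two lines of 80 characters, and no "test" as a verb) can be checked mechanically. The following Python sketch is an assumption about how such a check might look; the function name `description_problems` is hypothetical, and looking only at the first word is a deliberately rough heuristic for spotting "test" used as a verb.

```python
MAX_DESCRIPTION_LENGTH = 160  # two lines on an 80-column terminal


def description_problems(description: str) -> list[str]:
    """Return the rule-of-thumb violations found in a task description."""
    problems = []
    text = description.strip()
    if len(text) > MAX_DESCRIPTION_LENGTH:
        problems.append(f"longer than {MAX_DESCRIPTION_LENGTH} characters")
    # Rough heuristic (an assumption): a description whose first word is
    # "test" is using it as a verb, as in "Test feature ABC".
    first_word = text.split(maxsplit=1)[0].lower() if text else ""
    if first_word == "test":
        problems.append('starts with the verb "test"; say what you want to learn')
    return problems
```

An empty list means the description passes both checks; it says nothing about whether the description is actually useful.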

I generally encourage a work breakdown granularity to the level of tasks that will require anywhere from 1 hour to a couple of days if the feature works well!

Some Popular Types of Testing Tasks

Here are some of the types of tasks I identify:

Capabilities

Test tasks based on what the application is supposed to do. Capability-based tasks focus on confirming that an application does what it is supposed to do. Requirement and functional specifications can be used as a source of capability-based testing tasks.

Failure Modes

Failure mode test tasks are “what if” questions. I ask “what if” something breaks. Failure mode test tasks are often inspired by how a system is designed. I look at all the objects, components and interfaces in a system and ask what if they break or exhibit some sort of unanticipated failure. Failure modes can be the result of harshly constrained system resources or forced error conditions.

Quality Factors

Quality factors are characteristics of a system that must be present for the project to be successful. Quality factors are the “ilities”, including usability, reliability, availability, scalability and maintainability. Quality factor test tasks often involve experiments to determine if a quality factor is present. Examples include performance, load, and stress testing.

Usage Scenarios

A usage scenario test task challenges whether a user can achieve their tasks with the software under test. To paraphrase the Kennedy inaugural address – we ask not what the software does for the user but rather we ask what the user does with the software. Usage scenario-based test tasks involve identifying who is using the system, what they are trying to achieve, and in what context.

Business Rules

Business rules are an asset. Business rules can be an excellent source of testing tasks for transaction-oriented information technology systems. Decision tables and process flow models can be a basis for powerful test tasks which can make or break a company. Testing business rules is not just about finding bugs; it is about validating that systems implement core values.

Combinations

When running transactions there are often many factors that influence the processing approach taken by the application. Each factor, or condition, may have several different values, and they interact with each other to impact the behaviour of underlying algorithms and processing in the system under test. Varying combinations and permutations of values of variables can help identify bugs related to how multiple factors are processed with a reasonable number of test cases.
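One common way to keep the number of combinations reasonable is pairwise (all-pairs) selection: pick a subset of combinations such that every pair of factor values appears together at least once. The text does not name a specific technique, so this is a swapped-in illustration, not the author's method. The greedy Python sketch below uses invented factor names and values, and it does not guarantee the smallest possible set.

```python
from itertools import combinations, product


def all_pairs(factors: dict[str, list[str]]) -> list[dict[str, str]]:
    """Greedily choose combinations until every pair of values is covered."""
    names = list(factors)
    candidates = [dict(zip(names, values)) for values in product(*factors.values())]

    def pairs_in(case):
        # Every (factor, value) pairing that this combination exercises.
        return {((a, case[a]), (b, case[b])) for a, b in combinations(names, 2)}

    uncovered = set().union(*(pairs_in(c) for c in candidates))
    chosen = []
    while uncovered:
        # Take the combination that covers the most still-uncovered pairs.
        best = max(candidates, key=lambda c: len(pairs_in(c) & uncovered))
        chosen.append(best)
        uncovered -= pairs_in(best)
    return chosen


# Hypothetical factors for a payment transaction (illustration only).
FACTORS = {
    "currency": ["CAD", "USD", "EUR"],
    "channel": ["web", "mobile"],
    "customer": ["new", "returning"],
}
cases = all_pairs(FACTORS)
```

With these invented factors the full cross product has 12 combinations; the greedy selection covers every pair with fewer.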

States

When testing a stateful application a state model helps me come up with test tasks. For example, a transaction goes through many states of existence from creation through approval, payment, and delivery. I use state models to identify test tasks such as getting to states, exercising state transitions, and navigating paths through the system.
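A state model can be turned into candidate test tasks fairly mechanically. The Python sketch below assumes a small, acyclic state model; the state names echo the transaction example above, the "rejected" branch is an added assumption, and the function names are hypothetical.

```python
# Hypothetical state model: each state maps to the states it can move to.
TRANSITIONS = {
    "created": ["approved", "rejected"],
    "approved": ["paid"],
    "paid": ["delivered"],
    "delivered": [],
    "rejected": [],
}


def transition_tasks(transitions):
    """One task description per state transition."""
    return [
        f"Confirm a transaction can move from {src} to {dst}"
        for src, targets in transitions.items()
        for dst in targets
    ]


def paths(transitions, start, path=None):
    """Yield every path from start to a terminal state (acyclic models only)."""
    path = (path or []) + [start]
    if not transitions[start]:
        yield path
    for nxt in transitions[start]:
        yield from paths(transitions, nxt, path)
```

Each transition and each end-to-end path is a candidate task; a model with cycles would need a depth limit that this sketch does not include.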

Data

Data is a rich source of testing tasks. Data flow paths can be exercised. Different data sets can be used. Data can be cooked up and built from combinations of different data types. Stored procedures can be verified. Test tasks can be developed to create, update, and remove any persistent data.

Environments

Exploring how the application behaves in different operating environments is a rich source of testing tasks. Environment test tasks can relate to varying the platform, hardware, software, operating system, locale, and co-resident third-party software.

Internationalization and Localization

When products are developed for the international markets a lot of risks come up as part of internationalization and localization.

Internationalization enables products to support multiple languages and cultures.

Localization is creating a locale-specific version of the product.

There are enormous risks along the path to addressing the global market related to processing data, business rules, and literally the complete computer-human interface.

Unit Test

Unit test tasks are derived from the technical work programmers do to build the software being tested. Unit test tasks can look at the structure of the software and the interaction between components, modules, objects, methods, classes, stored procedures, processes, and other elements used to construct software.

Test Oracles

Test Oracles are strategies to assess correctness. Many different tools and techniques exist to help assess correctness. Some oracles are documents such as classic IEEE 830 style requirement documents. Other oracles are problem-solving heuristics or rules of thumb that guide domain experts. The method of assessing correctness may suggest testing tasks that can confirm or contradict the hypothesis that the application is working correctly.

Creative Tasks

Creative test tasks come from many sources. I often use deliberate lateral thinking techniques (for example, Six Thinking Hats by Edward de Bono) to come up with cool and effective tests. I also use metaphors to come up with testing tasks. I wonder what would happen if the Tasmanian devil used the system? Perhaps a Dr. Seuss story inspires some testing tasks? Perhaps great detectives? Movies? Pretty much anything goes here.

Path Test Tasks

Many applications have a series of capabilities that can be used in a wide variety of patterns. The pathways users take to navigate through a system are a great source of test tasks. There are also workflow paths, data flow paths, control flow paths, and many others. Paths can be a powerful source of test tasks to help identify problems in which one feature interferes with another.

Boundary Test Tasks

Boundary bugs continue to show up in software projects at points where extreme values for variables are selected or at the extreme minimum and maximum points of structures. Boundary test tasks can be suggested from written requirements or any objects in which two or more people must interpret the same technical descriptions.
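For a numeric variable with a stated minimum and maximum, the classic boundary candidates are the limits themselves and their immediate neighbours. The Python sketch below assumes integer-valued inputs; the function name `boundary_values` is hypothetical.

```python
def boundary_values(minimum: int, maximum: int) -> list[int]:
    """Return values at, just inside, and just outside an inclusive range."""
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    candidates = {
        minimum - 1, minimum, minimum + 1,
        maximum - 1, maximum, maximum + 1,
    }
    # A set removes duplicates when the range is very narrow.
    return sorted(candidates)
```

Each returned value can seed a task description stating what the tester wants to learn at that boundary.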

Automation Test Tasks

Automation is not only a toolset to help in the creative execution and organization of tests. Having tools available to support the testing effort can, in and of itself, be a source of test tasks. Imagine what testing you could do with the right tool. This section explores generating test tasks from test automation tools.

Regression Test Tasks

Regression testing dominates continuous integration servers in many agile projects. So much energy is dedicated to making sure we didn’t accidentally break something when we change our code base for whatever reasons. How our codebase behaves in response to change is the topic of regression testing. How can regression testing be implemented, and what should be included in regression testing? This remains an open question and a remarkably interesting source of test tasks.

Collecting Task Data

Whenever a task is completed, I capture the amount of effort which was required to implement the task. I try to make this remarkably simple and done in the same system used to manage work. If I am in an agile team, I ask the Scrum Master to collect the data during the stand-up meeting.

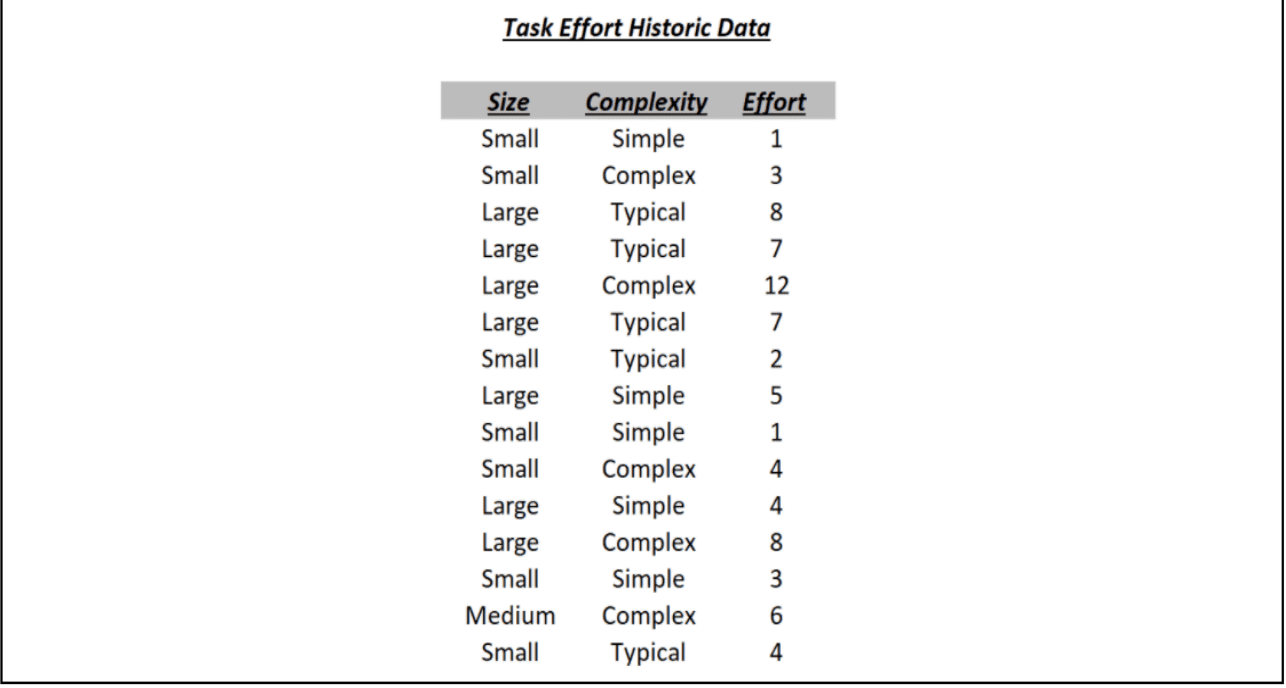

So, the data I have collected for every completed task includes only three simple elements:

SIZE is collected when the task is identified.

COMPLEXITY is collected when the task is identified.

ACTUAL EFFORT when the task is completed.

I place this data in a spreadsheet, one row per data set. The unit of effort I chose to use in the project from which this example is derived is the number of sessions of testing required to complete the task. I defined a session of testing as 90 minutes, plus or minus 10 per cent, of uninterrupted work on a specific task. During a session, the tester is not distracted by email, social media, or any other type of interruption.
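The three fields recorded per completed task map naturally onto one spreadsheet row. Here is a minimal Python sketch, assuming a CSV file stands in for the spreadsheet; the class name `CompletedTask`, the column names, and the helper `to_csv` are illustrative assumptions, not the author's actual tooling.

```python
import csv
import io
from dataclasses import astuple, dataclass

SESSION_MINUTES = 90  # nominal session length from the text, plus or minus 10%


@dataclass(frozen=True)
class CompletedTask:
    size: str             # SMALL, MEDIUM or LARGE, recorded at planning time
    complexity: str       # SIMPLE, TYPICAL or COMPLEX, recorded at planning time
    actual_sessions: int  # recorded when the task is completed


def to_csv(tasks) -> str:
    """Serialize completed tasks as CSV text, one row per task."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["size", "complexity", "actual_sessions"])
    writer.writerows(astuple(task) for task in tasks)
    return buffer.getvalue()
```

Keeping the record this small is the point: it takes seconds to fill in during a stand-up.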

You will note that there are exactly nine types of tasks categorized by SIZE and COMPLEXITY.

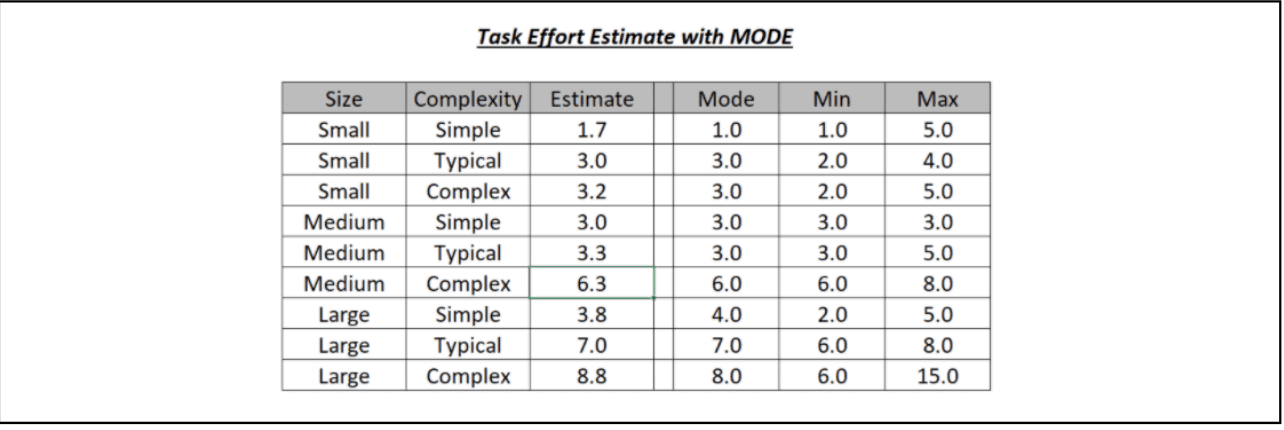

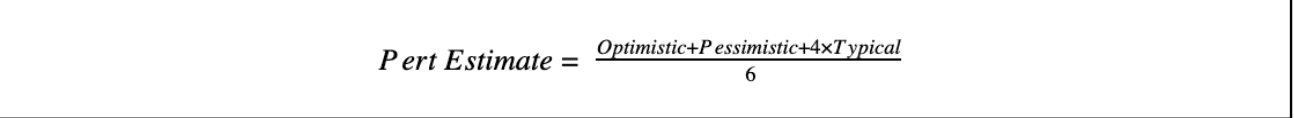

To compute the estimate for each task type I use the PERT estimation formula. I learned the PERT formula in my earliest days as a professional software engineer in the 1980s. The PERT estimation formula is taught as part of project management in general and is included in the Project Management Institute’s Project Management Body of Knowledge (PMBOK). The PERT formula is simple and practical. It makes use of what are known as three-point estimates. In PERT the estimated effort of a task is computed from the optimistic (minimum) effort required, the pessimistic (maximum) effort required, and the typical (mode, the most common) effort required. I rely on spreadsheet table lookups to derive this information from the collection of past data.
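The text does not spell the formula out; the classic PERT three-point weighting is (optimistic + 4 × most likely + pessimistic) / 6. The Python sketch below applies that weighting to a list of past efforts for one task type. The function name `pert_estimate` is an assumption, and so is the tie-breaking rule: `statistics.mode` returns the first mode encountered when several values are equally common (Python 3.8 or later).

```python
from statistics import mode


def pert_estimate(past_efforts):
    """Three-point PERT estimate from past actual efforts for one task type."""
    if not past_efforts:
        raise ValueError("no past data to calibrate from")
    optimistic = min(past_efforts)   # least effort ever needed
    pessimistic = max(past_efforts)  # most effort ever needed
    most_likely = mode(past_efforts) # most common effort
    return (optimistic + 4 * most_likely + pessimistic) / 6
```

For example, past efforts of 2, 3, 3, 4 and 8 sessions give (2 + 4 × 3 + 8) / 6, roughly 3.7 sessions.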

Estimating a new task

When a new task is identified I can look up the estimated effort required if I know what the SIZE and COMPLEXITY values are. In the above example if a new task is identified with a SIZE of LARGE and a COMPLEXITY of TYPICAL then I would estimate the effort required to implement the task as 7 sessions of testing.
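The lookup described above can be sketched as a table keyed by (SIZE, COMPLEXITY). In the Python sketch below the history rows are invented for illustration and are not the data behind the article's figures; the function name `build_estimate_table` is hypothetical, and the estimate uses the classic PERT weighting of minimum, mode and maximum.

```python
from collections import defaultdict
from statistics import mode


def build_estimate_table(history):
    """Group past efforts by (size, complexity) and compute a PERT estimate each.

    history: iterable of (size, complexity, actual_sessions) tuples.
    """
    efforts = defaultdict(list)
    for size, complexity, sessions in history:
        efforts[(size, complexity)].append(sessions)
    return {
        key: (min(values) + 4 * mode(values) + max(values)) / 6
        for key, values in efforts.items()
    }


# Invented history, for illustration only.
HISTORY = [
    ("LARGE", "TYPICAL", 4), ("LARGE", "TYPICAL", 6),
    ("LARGE", "TYPICAL", 6), ("LARGE", "TYPICAL", 8),
    ("SMALL", "SIMPLE", 1), ("SMALL", "SIMPLE", 1), ("SMALL", "SIMPLE", 2),
]
table = build_estimate_table(HISTORY)
estimate = table.get(("LARGE", "TYPICAL"))  # None would mean no past data yet
```

A missing key is a useful signal in itself: the team has no completed tasks of that type to calibrate from.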

Using Simple Experience-Based Test Estimation

A couple of years ago I was honoured to receive an email from a client who shared their experiences using SEBTE for over 10 years of projects at a major financial services company. The method stood the test of time, remaining applicable across dramatic changes in lifecycle models and technologies. The method was trivial to implement and was easily recalibrated whenever context factors changed or teams were restructured or restaffed.

When Not to Use Simple Experience-Based Test Estimation

I have only been able to apply SEBTE to work which can be broken down into tasks related to what the tester is being asked to learn about. If I cannot break the project down to tasks, then I cannot use SEBTE.

What Simple Experience-Based Test Estimation does not do

SEBTE does not estimate elapsed time. SEBTE does not estimate capital cost. SEBTE does not estimate test coverage. SEBTE does not estimate bug counts. SEBTE does not estimate team size.

Can Simple Experience-Based Test Estimation work with more than two factors?

In most projects, I use two factors for estimating tasks, namely SIZE and COMPLEXITY. I have on some projects used additional factors when it made sense and found SEBTE to be quite extensible. A specific example was an internationalization project where the factors influencing the effort included SIZE, COMPLEXITY and MULTILINGUAL DATA HANDLING. I estimated and tracked over 430 tasks with this method and was able to accurately estimate the to-go effort as the project evolved.

How can a team use Simple Experience-Based Test Estimation?

In my experience, the best way to get great test estimates is to consult a group of people who are involved in the project but who have varied roles and diverse perspectives.

I will usually start alone and build a list of testing objectives that will become tasks. I invest about ninety minutes in this activity and derive the testing objectives from sources available to me from the product requirements, design, past similar projects and even the source code.

I then share the list with different individuals from diverse roles such as programmers, testers, product managers, project managers, support staff, system administrators, customers, and users. I ask them to read through the list and come up with similar testing ideas based on their own personal experience and knowledge. Generally, I get a great set of ideas that can easily be ranked and sorted and turned into testing tasks.

Using Simple Experience-Based Test Estimation in turbulent projects

I consider a project turbulent when there are many and frequent changes to context factors. When Business, Technology, Organizational and Cultural factors change frequently it is important to revise estimates.

In turbulent projects, you will need to update the tasks. I urge you to estimate testing effort on project elements in a short time horizon. For example, in one sprint you can estimate all the testing tasks for the stories being implemented but you should probably not estimate beyond the sprint boundaries since that is a moving target.

I have used SEBTE to estimate tasks combined with risk-based prioritization. For risk-based prioritization, the impact of the testing task on business and the likelihood of failure are used to prioritize testing activities. I sort activities by decreasing priority. I can then sum the effort required for all tasks sorted by priority to see how much testing we can complete on a fixed budget. This provides me with useful information to help advocate testing to project stakeholders and also shows different alternatives as to how the testing budget can be spread across product risks.
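The budget cut-off described above can be sketched as a sort followed by a running sum. In the Python sketch below, scoring priority as impact multiplied by likelihood on 1-to-5 scales is an assumption (the text only says both are used), and the names `PlannedTask` and `tasks_within_budget` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PlannedTask:
    description: str
    impact: int       # business impact, 1 (low) to 5 (high); assumed scale
    likelihood: int   # likelihood of failure, 1 to 5; assumed scale
    estimate: float   # estimated effort in sessions


def tasks_within_budget(tasks, budget_sessions):
    """Walk tasks in decreasing priority until the next one would exceed the budget."""
    ranked = sorted(tasks, key=lambda t: t.impact * t.likelihood, reverse=True)
    selected, spent = [], 0.0
    for task in ranked:
        if spent + task.estimate > budget_sessions:
            break  # everything from here down falls outside the budget
        selected.append(task)
        spent += task.estimate
    return selected, spent
```

Running this with several budget figures gives stakeholders a concrete picture of which risks each budget level leaves unexplored.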

TO BE CONTINUED…

Rob Sabourin

Rob has more than thirty-nine years of management experience leading teams of software development professionals. A highly respected member of the software engineering community, Rob has managed, trained, mentored, and coached thousands of top professionals in the field. He frequently speaks at conferences and writes on software engineering, SQA, testing, management, and internationalization. Rob authored I am a Bug!, the popular software testing children’s book. He works as an adjunct professor of software engineering at McGill University and serves as the principal consultant (and president/janitor) of AmiBug.Com, Inc. Contact Rob at rsabourin@amibug.com